Inside my AI design engineering workflow

Author:

Max Bailey

Published: May 14, 2026

15 minutes to read

AI design tools produce impressive output. Most of it isn’t shippable.

Not because the code is bad - it usually isn’t. Because it’s disconnected. Components get reinvented when the real ones exist a few feet away in your codebase. Tokens get fabricated when your design system has them defined. Visual bugs survive every iteration because the agent has no way to see them.

This post is about the workflow that closes that gap.

TL;DR

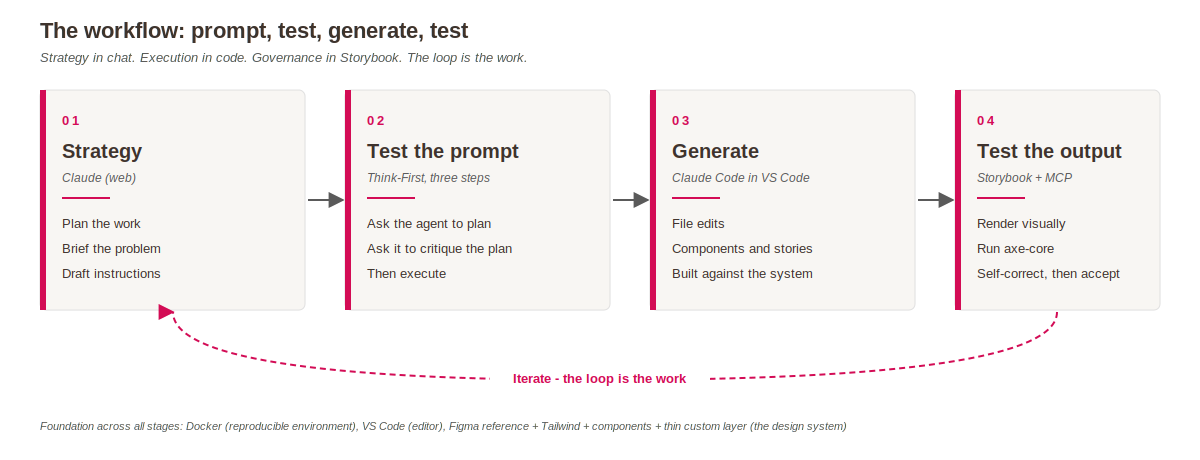

Four phases (strategy, test the prompt, generate, test the output) running across five tools (Docker, VS Code, Claude web, Claude Code, Storybook with MCP). The principle behind all of them: codify your standards as infrastructure, give the agent a feedback loop, trust the loop only after you’ve tested both ends.

Result: AI does the typing, the design system does the thinking, and the loop catches what slips.

AI doesn’t know what it didn’t notice

To make this concrete, I ran an exercise.

I asked Claude Design - a tool focused on the concept phase - to build an enterprise Work Order Assignment form. Detailed prompt: 8 fields, conditional logic (extra fields appearing when Priority is set to Urgent), inline validation, WCAG 2.2 AA accessibility, design system tokens. Then three rounds of iteration: accessibility, conditional logic, design tokens.

26 minutes 30 seconds. Four iterations. Genuinely impressive code on the surface.

Three things were good:

- Real accessibility wiring. A reusable Field wrapper with a

describedByForhelper that conditionally joined help and error ids. Error elements withrole="alert"for announcement. Visually hidden “required” text for screen readers. - Conditional logic that worked. Setting Priority to Urgent surfaced the extra fields with proper validation, wrapped in

aria-live="polite"for screen reader announcement. - A comprehensive CSS custom property system. Semantic naming (

--fg,--surface,--accent,--danger), spacing, typography, borders, shadows, z-index, even component-specific dimensions like--avatar-smand--target-min.

Three things survived all three iterations and were never fixed:

- Visual focus state on form fields was weak from the first generation and stayed weak. The aria attributes were correct, but the rendered output didn’t make focus obvious to a sighted user. No iteration noticed.

- The date and time picker had an unwanted scrollbar from the first generation. Three iterations later, still there.

- The Priority dropdown shipped with awkward sub-labels (“Schedule when convenient”, “Normal queue”, “Same-week response”, “Requires manager approval”). No iteration touched them.

Three things were never possible:

- The components produced were reinvented from scratch. Claude Design has no access to my project’s design system - where real Combobox, DatePicker, and Field components already exist - so the agent built its own versions instead.

- The tokens were invented. Claude Design has no design system to reference, so it has to make them up. Plausible semantic naming, but nothing that maps to a real deployed system.

- No automated validation. I had to read the code to verify the wiring. axe-core never ran. No interaction tests ever executed.

Three rounds of iteration. Persistent UX issues. Reinvented components. Fabricated tokens. No tests.

The lesson: iterations only fix what you explicitly ask for. The agent doesn’t know what it didn’t notice. Without a feedback loop tied to real output, problems survive multiple rounds of refinement.

Design decisions are infrastructure

Hardik Pandya, Senior AI Principal at Atlassian, put this well in a recent post: design decisions should be treated as infrastructure. Every decision should find a path into a format the AI can consume. I’ve been building this in production on my current project without knowing he’d written it up. The same idea, two angles.

The principle: codify standards as infrastructure, give the agent a feedback loop, trust the loop only after you’ve tested both ends.

Three things turn this from idea into practice:

1. Reference established in Figma first. Figma is still the right place for visual decisions, design conversations, and the source of truth designers and stakeholders react to. The reference file isn’t the design system itself - it’s the brief that informs the system.

2. Standard primitives in code, not custom from scratch - and I didn’t pick them alone. This is the bit worth being honest about. The component library choices on my current project came from Ahmed Youssef, the architect on the team. Tailwind for the web. For the native mobile side of the same project, Ahmed introduced me to NativeWind - a library I didn’t know existed until that conversation.

The lesson: talk to people. Especially the architects who’ve already made these decisions on something hard. If I’d tried to make these choices alone, I’d have been weeks slower and the decisions would have been worse.

3. Thin custom layer on top. The custom work is the bit unique to the project. Everything else is composition. The agent-friendly stack is, almost by accident, also the easier-to-maintain stack - because agents and human maintainers benefit from the same thing: code that looks like other code.

The setup: five tools, each with one job

| Tool | Job |

| — | — |

| Docker | Reproducible environment |

| VS Code | Editor |

| Claude (web) | Strategy |

| Claude Code | Execution |

| Storybook + MCP | Governance |

Why this stack:

- Docker - same Node, same deps, same Storybook on any machine. Containers also mean the AI operates in a known context, not whatever’s installed on my laptop.

- VS Code - where the files live, where I read code, run tests, debug.

- Claude (web) - thinking out loud, planning, drafting briefs, writing instructions. Done away from the codebase to avoid muddled prompts.

- Claude Code - file edits, terminal, generating components against the design system.

- Storybook + MCP - components live here with axe-core. The MCP server (released March 24, 2026) exposes the manifest, stories, and tests to the agent.

Each layer does something the others can’t. Strategy lives in chat. Execution lives in code. Governance lives in Storybook. Reproducibility lives in Docker. Try to do strategy in Claude Code and you waste tokens reasoning out loud in the codebase. Try to do governance in chat and you have nothing to test against.

One thing worth naming up front

The concept phase happens before this workflow starts - the bit where you’re working out what to build. That’s a different kind of work with its own set of tools: Figma, Claude Design, existing references, brand guidelines, wireframes, sketches. Lots of valid ways to get there. This post isn’t about that phase. It’s about what comes after - the moment you’ve decided what to build and need to deliver it well, repeatedly, with governance.

The workflow: four phases, and order matters

1. Strategy in Claude (web). I start in chat, away from the codebase. What’s the user trying to do? What components already exist that I should reuse? Where are the edge cases? What does “done” look like? This is the ambiguity layer - back-and-forth conversation, surfacing assumptions, pushing back on first drafts. Doing this in Claude Code burns tokens on reasoning that’s better done in plain prose.

2. Test the prompt. Before any code is generated, I test the instruction. The pattern is Think-First in three steps:

- Ask the agent to produce a plan, not the work

- Ask the agent to critique its own plan

- Then, and only then, give the green light to execute

This catches drift before it costs anything. If the plan is wrong, refine the prompt and run it again. The output is a tight, tested instruction set ready to hand to Claude Code.

3. Generate in Claude Code. Instructions go into Claude Code in VS Code. Claude Code reads the design system via Storybook MCP, pulls in the relevant components, and generates the work. File edits, new components, story files, test cases. Built against actual standards, with actual tests available.

4. Test the output. This is where most AI workflows fall over - they stop after generation.

- Storybook renders the component visually. I can see it.

- Axe-core runs accessibility checks. Violations show up in the panel.

- Storybook MCP runs interaction tests. Right now I copy failures from the panel into Claude Code, which writes the fix prompts. When patterns emerge, I have Claude Code audit broader sets of components against them. Full agent-driven self-correction is the direction - I haven’t got that stable end-to-end yet.

- I review what came out and either accept, reject, or push back with new context.

The agent has eyes on the output. So do I. Real output is the ground truth, not the description.

Why the order matters: plenty of teams have all five tools. What they don’t have is the discipline to use them in the right order. Generate before testing the prompt → wasted cycles on bad output. Test the output without governance baked in → the loop runs but the standards drift. Skip strategy → fuzzy prompts and fuzzy output.

Before and after: the same prompt, with and without the loop

| Scenario | Claude Design (no design system, no tests) | This workflow (Storybook MCP + axe-core + design system manifest) |

|---|---|---|

| Components | Reinvents Combobox, DatePicker, Field from scratch | Reuses existing components from the manifest |

| Tokens | No design system to reference; fabricates its own semantic names | Picks from the real token set in the manifest |

| Accessibility violations | I have to read the code to find them. axe-core never runs | Surfaces in the Storybook panel after every generation |

| Visual bugs (focus state, scrollbar) | Survive every iteration. Agent can’t see them | Surface in the panel; failures fed back to the agent for fix prompts |

| New AI session | Starts from zero context, makes new fictional choices | Reads the spec files and manifest, makes the same choices |

| Ready to ship | Would need full translation pass before merging | Built against the real codebase, ready to PR |

Honest caveats

A few things are worth naming.

Storybook MCP is still in preview. React only at launch, with other frameworks coming later this year. The API may change. If you’re betting your team’s workflow on this today, treat it as “best current option” not “permanent answer.” That’s true of most of the AI tooling stack right now.

The workflow has rough edges. Context doesn’t always carry cleanly from web Claude into Claude Code - I sometimes find myself re-explaining the brief in different ways for different tools. Token budgets matter. Long sessions in Claude Code can degrade as the context fills up, so I’m careful about when to start fresh.

Not every design system task fits this model. The workflow is built for component-led work - building, testing, iterating on UI components against a defined design system. For deep visual exploration, brand creative, or designing patterns that don’t yet exist anywhere, the tools are less useful and human judgement matters more. Don’t try to force a feedback loop onto work that’s still in discovery.

This is what’s working for me, on my current project, in May 2026. By August it’ll have moved on. The principles are durable. The specific tools are not.

Why this matters

If you abstract away the specific tools, what’s left is a principle: codify your standards as infrastructure, give the agent a feedback loop, trust the loop only after you’ve tested both ends of it.

Codified standards mean tokens, prop contracts, design system components, axe-core gates - the things that define “what good looks like” in a form an agent can read. Without them, the agent invents its own answer and you spend the next sprint cleaning up the drift.

A feedback loop means the agent can see what it built, run real tests against it, and self-correct based on real output. Not just description. Real rendering. Real accessibility violations. Real interaction tests.

Trusting the loop means the human stops policing every individual output and starts policing the system that produces them. Less time reviewing components, more time defining what makes a component good in the first place. That’s where the design engineering job is actually heading - and it’s a more interesting version of the role, not a smaller one.

What’s next

The practice keeps evolving.

Automated test-runner for CI gates. The current workflow gives me axe-core in the Storybook UI as I work - that catches violations during development. The next layer is @storybook/test-runner running headless accessibility checks against every story, gated in CI. Same principle applied at the team level rather than the individual level.

Other framework support for Storybook MCP is due later this year. When the Vue or Angular version lands, the workflow translates with minimal change - the principle holds even when the specific tool moves.

If any of this is useful, the best thing you can do is try it. Pick the smallest piece that fits your context. Set up Storybook with axe-core. Try Think-First on a real prompt. Talk to your architect. The compound benefit comes from running the loop, not from reading about it.

FAQs

“Isn’t this just process for the sake of process?”

The four phases each solve a specific failure I’ve watched repeat across engagements. Skip strategy and you write fuzzy prompts. Skip the prompt test and you waste cycles on bad output. Skip output testing and bugs survive iterations. Each phase exists because skipping it costs something. The process is the answer to “what failed last time.”

“Won’t this slow me down compared to just vibe coding?”

Initially, slightly. After a few cycles, no. The strategy phase pays back the moment you stop generating code that has to be thrown away. The prompt test pays back the moment the agent self-corrects on its own plan. The output test pays back the moment axe-core catches a violation that would have shipped. Vibe coding feels fast because the cost shows up later, in the cleanup.

“What if my project doesn’t have Storybook?”

Then governance doesn’t live in Storybook - it lives somewhere else. The principle is what matters: the agent needs to read your standards somewhere and test against them somewhere. That could be a spec file directory, a token reference doc, a component library with prop contracts, a CI test suite, or some combination. Storybook with MCP is the tool I use because it covers the most ground at once. The point is not to skip the layer.

“Doesn’t Claude Code already do this?”

Claude Code is the execution layer. It does what it’s told and reads what’s available. Without the design system layer to read, it makes the same fabrications anything else would. The MCP server is what gives it the spec files, the components, and the test results. Without that, you’re back to vibe coding in a more expensive IDE.

“Our designers already know this stuff.”

Unwritten knowledge doesn’t transfer to AI sessions. It also doesn’t transfer to new hires, contractors, or the consultant who picks up the engagement when you roll off. Writing it into spec files and token packages puts it in version control where it gets read at the start of every AI session - and at the start of every onboarding.