Stop Vibe Coding Your IaC: Build Backpressure Instead

Trent Steenholdt


May 12, 2026

15 minutes to read

The problem with vibe coding in infrastructure

Vibe coding is everywhere in 2026, and without good human intervention, it produces what we are all calling “AI slop”. This post is about making sure that when you’re using tools like Claude or Codex, you’re not adding to that pile.

AI slop is bad anywhere in your DevOps lifecycle, but it’s especially damaging when it’s in your IaC. Infrastructure as Code has real consequences:

- wrong network defaults can expose workloads

- wrong module choices create drift from your platform standards

- wrong scope can break deployment pipelines for hours

- wrong security and governance constraints can lead you to the next cyber incident

Vibe coding is now the entry level in this new world. It’s useful for momentum, but momentum without guardrails isn’t governance, and IaC needs governance, reproducibility, and cost control.

If you find yourself prompting in a chat loop with “no, not like that” or “stop changing that file”, you’re burning tokens and getting nowhere. The model isn’t offended, isn’t persuaded, and can’t be coached by frustration. It only responds to structured context.

Shouting at an LLM isn’t feedback. It’s noise.

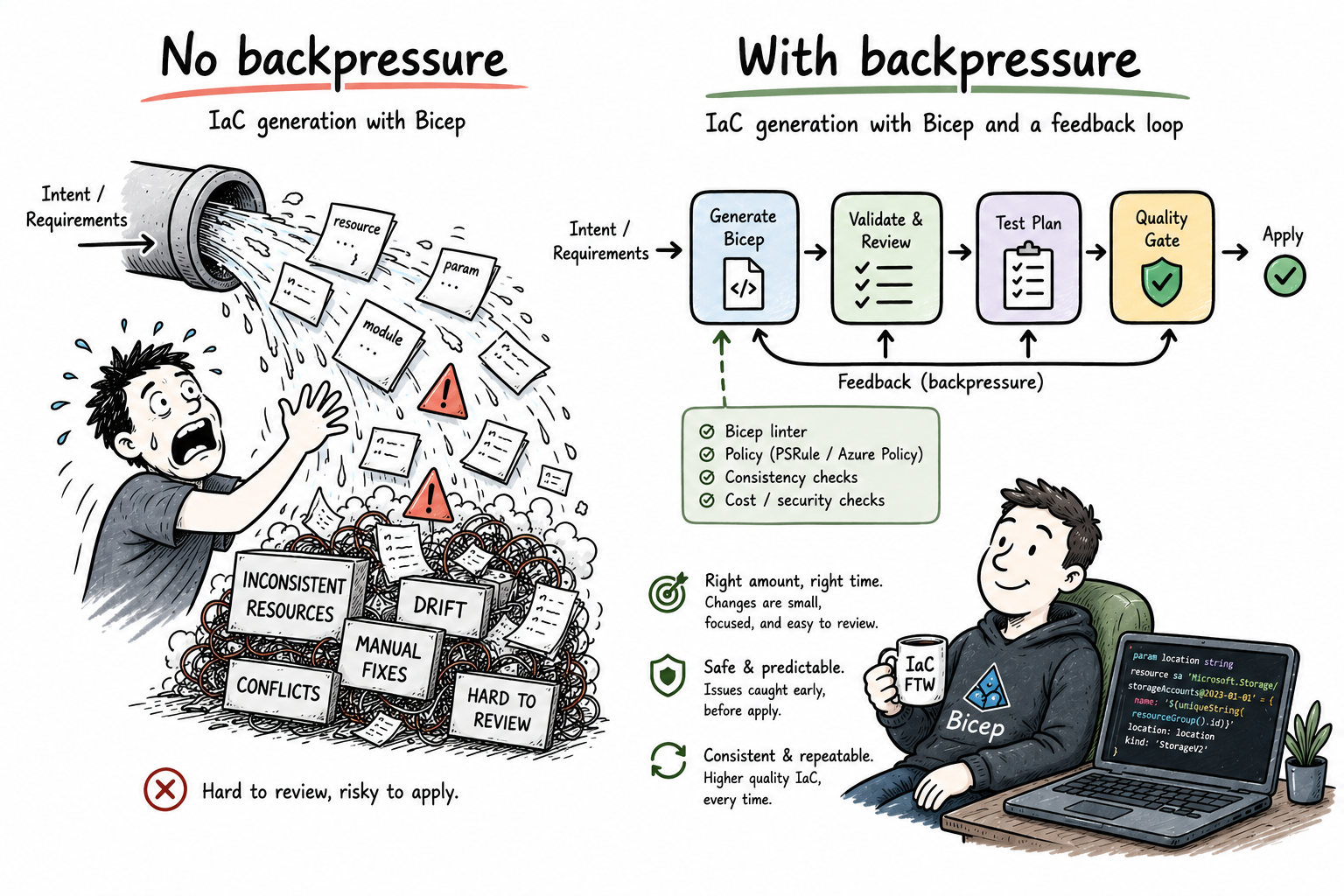

What to do instead: backpressure

First, what is “backpressure”, you ask? Like all things AI, it’s a concept borrowed from somewhere else: in this case, streaming systems. When a downstream component gets overwhelmed, it signals upstream to slow down or stop until it can catch up. The pressure flows backwards through the pipeline.
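To make the streaming idea concrete, here is a minimal Python sketch (an illustration only, not part of the generator) that uses the standard library's bounded `queue.Queue` as the downstream buffer. Once the buffer is full, `put_nowait` raises `queue.Full`, and that refusal is the signal upstream to hold back. The `produce` helper is a hypothetical name.

```python
import queue


def produce(buffer: queue.Queue, items: list[int]) -> list[int]:
    """Offer items downstream; return the ones pushed back because the buffer was full."""
    pushed_back: list[int] = []
    for item in items:
        try:
            buffer.put_nowait(item)   # downstream has capacity: accepted
        except queue.Full:
            pushed_back.append(item)  # downstream is full: upstream must hold this item
    return pushed_back


buffer: queue.Queue = queue.Queue(maxsize=3)  # downstream can hold 3 items
rejected = produce(buffer, [1, 2, 3, 4, 5])
print(rejected)  # [4, 5]

buffer.get_nowait()                 # downstream catches up by consuming one item
second = produce(buffer, rejected)  # upstream retries what was pushed back
print(second)  # [5]
```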

In the world of AI, the same principle applies to quality instead of throughput. The validator is the downstream component. When the model produces something wrong, the validator pushes back, the model doesn’t move forward writing garbage, and nothing lands in your codebase until it passes.

In practice it is a controlled feedback loop:

- ask the model to generate IaC

- run real validators and policy checks

- feed exact violations back to the model

- regenerate with full history

- repeat until the output passes, or fail with clear reasons
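The loop above fits in a few lines of Python. This is a minimal, provider-agnostic sketch that assumes you pass in your own `generate` function (a model call that receives the full message history) and `validate` function (real tooling that returns violation strings). `backpressure_loop`, `fake_generate` and `fake_validate` are hypothetical names used only for illustration.

```python
from typing import Callable

Message = dict[str, str]


def backpressure_loop(
    generate: Callable[[list[Message]], str],
    validate: Callable[[str], list[str]],
    request: str,
    max_rounds: int = 5,
) -> str:
    """Generate, validate, feed exact violations back, and repeat until clean."""
    history: list[Message] = [{"role": "user", "content": request}]
    violations: list[str] = []
    for round_num in range(1, max_rounds + 1):
        output = generate(history)     # model call sees the full history
        violations = validate(output)  # real tooling decides, not vibes
        if not violations:
            return output              # converged: safe to write
        # Push back: keep the failed output and the exact errors in context
        history.append({"role": "assistant", "content": output})
        history.append({
            "role": "user",
            "content": f"Round {round_num} failed. Fix exactly:\n"
            + "\n".join(f"- {v}" for v in violations),
        })
    raise RuntimeError(f"No convergence after {max_rounds} rounds: {violations}")


# Stand-ins for a model and a validator, just to exercise the loop
def fake_generate(history: list[Message]) -> str:
    # Only gets it right once feedback is in the history
    return "targetScope = 'resourceGroup'" if len(history) > 1 else "targetScope = 'tenant'"


def fake_validate(text: str) -> list[str]:
    return [] if "'resourceGroup'" in text else ["[scope] tenant scope is not allowed"]


result = backpressure_loop(fake_generate, fake_validate, "storage account in australiaeast")
print(result)  # targetScope = 'resourceGroup'
```

Because the failed output and its violations stay in the history, the second call corrects itself rather than starting cold; if it never converges, the `RuntimeError` carries the last set of violations as the clear reason.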

That loop is the uplift from prompt engineering to agentic engineering.

You stop hoping the first answer is perfect and start building a system that converges on quality.

Why this matters for Azure, Bicep and Infrastructure as Code

A common failure mode is the model writing IaC as if it has tenant-level freedom. Real enterprise Azure rarely works like that.

Most teams deploy at resource group or subscription scope with role-based constraints, guardrails, and pre-approved module patterns.

A practical backpressure rule is:

- only allow `targetScope = 'resourceGroup'` or `targetScope = 'subscription'`

- reject `managementGroup` and `tenant` scopes unless explicitly approved
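One way to express this scope rule as a check is sketched below, assuming a regex over the `targetScope` line is good enough for your files (`check_scope` and its `approved` parameter are hypothetical). The pattern tolerates spacing differences, so `targetScope='subscription'` isn't rejected on formatting alone. It also requires an explicit declaration, even though Bicep falls back to `resourceGroup` when `targetScope` is omitted.

```python
import re

# targetScope = '<scope>' at the start of a line, tolerant of spacing
TARGET_SCOPE = re.compile(r"^\s*targetScope\s*=\s*'(\w+)'", re.MULTILINE)
ALLOWED_SCOPES = {"resourceGroup", "subscription"}


def check_scope(bicep_text: str, approved: frozenset[str] = frozenset()) -> list[str]:
    """Return scope violations; `approved` holds scopes granted an explicit exception."""
    match = TARGET_SCOPE.search(bicep_text)
    if match is None:
        # Bicep would default to resourceGroup, but this policy wants it stated
        return ["targetScope must be declared explicitly"]
    scope = match.group(1)
    if scope in ALLOWED_SCOPES or scope in approved:
        return []
    return [f"targetScope '{scope}' is not allowed without explicit approval"]


print(check_scope("targetScope='subscription'"))  # []
print(check_scope("targetScope = 'tenant'"))
# ["targetScope 'tenant' is not allowed without explicit approval"]
print(check_scope("targetScope = 'managementGroup'", approved=frozenset({"managementGroup"})))  # []
```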

Another practical rule is:

- prefer Azure Verified Modules (AVM) over raw resource declarations for supported services

This reduces bespoke code and aligns output with tested module contracts.
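A sketch of the AVM rule as a check, assuming a hand-maintained lookup from raw resource types to AVM module paths. The module paths below are the ones used in the example scripts in this post, without versions (pin whatever versions your platform team approves); `avm_violations` and `AVM_MODULES` are hypothetical names. Parsing `resource` declarations, rather than searching for any occurrence of a type string, lets it skip `existing` references, which point at resources you already own instead of adding bespoke code.

```python
import re

# Bicep resource declaration: resource <symbol> '<Type>@<apiVersion>' [existing] = ...
RESOURCE_DECL = re.compile(
    r"^\s*resource\s+\w+\s+'([^'@]+)@[^']+'\s*(existing)?", re.MULTILINE
)

# Hypothetical lookup: raw resource type -> AVM module path
AVM_MODULES = {
    "Microsoft.Storage/storageAccounts": "br/public:avm/res/storage/storage-account",
    "Microsoft.KeyVault/vaults": "br/public:avm/res/key-vault/vault",
}


def avm_violations(bicep_text: str) -> list[str]:
    """Flag raw declarations of types that have an AVM module; ignore `existing` references."""
    issues: list[str] = []
    for match in RESOURCE_DECL.finditer(bicep_text):
        resource_type, is_existing = match.group(1), match.group(2)
        if is_existing:
            continue  # referencing an existing resource is not new bespoke code
        module = AVM_MODULES.get(resource_type)
        if module:
            issues.append(f"Raw {resource_type} declared; use {module}")
    return issues


sample = """
resource sa 'Microsoft.Storage/storageAccounts@2023-05-01' = {
  name: 'stexample'
}
resource kv 'Microsoft.KeyVault/vaults@2023-07-01' existing = {
  name: 'kv-shared'
}
"""
print(avm_violations(sample))
# ['Raw Microsoft.Storage/storageAccounts declared; use br/public:avm/res/storage/storage-account']
```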

IaC Backpressure Generator

An IaC Backpressure Generator produces Bicep, validates it with real tooling, then steers the model with concrete correction prompts until it passes checks. You describe what you want once and the loop handles the rest. No manual reprompting, no copy-pasting errors back in, no guessing why it keeps getting it wrong.

Flow

The compile check runs the real Bicep compiler rather than guessing from the raw text. When `az bicep build` parses your Bicep, it builds an Abstract Syntax Tree (AST): a structured representation of every resource declaration, property, and scope in the file. Errors from the compiler are precise: wrong type, missing required property, invalid value. Those exact messages are what get fed back to the model, not a guess from a regex. The AVM, scope, and security policies in the example scripts are simpler pattern checks layered on top of that compile step.
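If you want the compiler feedback to be cleaner before it reaches the model, you can parse each diagnostic line into fields. This sketch assumes Bicep diagnostics follow the usual `path(line,col) : Level CODE: message` shape; the sample stderr is illustrative rather than captured from a real run, and `Diagnostic` and `parse_diagnostics` are hypothetical names. Dropping the temporary file path keeps the model focused on line, column and error code, and unrecognised lines are kept rather than silently discarded.

```python
import re
from typing import NamedTuple

# Assumed diagnostic shape: <path>(<line>,<col>) : <Level> <CODE>: <message>
DIAG = re.compile(
    r"^(?P<path>.+?)\((?P<line>\d+),(?P<col>\d+)\)\s*:\s*"
    r"(?P<level>Error|Warning|Info)\s+(?P<code>[\w-]+):\s*(?P<message>.*)$"
)


class Diagnostic(NamedTuple):
    line: int
    col: int
    level: str
    code: str
    message: str

    def for_model(self) -> str:
        # The temp-file path means nothing to the model; line/col and code do
        return f"line {self.line}, col {self.col}: {self.level} {self.code}: {self.message}"


def parse_diagnostics(stderr: str) -> tuple[list[Diagnostic], list[str]]:
    """Split compiler stderr into structured diagnostics and anything unrecognised."""
    parsed: list[Diagnostic] = []
    unparsed: list[str] = []
    for raw in stderr.splitlines():
        raw = raw.strip()
        if not raw:
            continue
        m = DIAG.match(raw)
        if m:
            parsed.append(Diagnostic(int(m["line"]), int(m["col"]), m["level"], m["code"], m["message"]))
        else:
            unparsed.append(raw)  # keep it: an unknown line may still matter
    return parsed, unparsed


# Illustrative stderr, not captured from a real run
stderr = (
    '/tmp/tmpab12.bicep(3,7) : Error BCP018: Expected the "=" character at this location.\n'
    "unrelated CLI notice\n"
)
diags, other = parse_diagnostics(stderr)
print(diags[0].for_model())
# line 3, col 7: Error BCP018: Expected the "=" character at this location.
print(other)  # ['unrelated CLI notice']
```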

The key is message history. Every round includes:

- the model’s previous output

- the exact errors

- a stricter correction prompt

That creates pressure in the right direction.

A note on API access

Both example scripts below call the model provider’s API directly, so you will need API credits from either console.anthropic.com or platform.openai.com. A Claude.ai or ChatGPT subscription does not cover API access; sadly, they are separate billing accounts.

If you don’t want to manage separate API credits, the same backpressure pattern is achievable through:

- Claude Code: included with Claude non-basic tier subscriptions. Rather than calling the API directly, you define the backpressure loop as a skill in `.claude/skills/iac-backpressure/SKILL.md`. Claude Code runs `az bicep build` natively as a bash tool, catches the violations, and retries until the output passes, all inside your existing subscription.

- Codex CLI: included with ChatGPT Pro and Enterprise. Define your validation rules in `AGENTS.md` and let Codex run the loop.

- An alternative such as Azure AI Foundry: access Claude or GPT through your Azure subscription using, say, a managed identity and a customised version of the example scripts below. No separate API credits, no keys to rotate.

I chose the API approach here because it produces workable, readable code that makes the loop explicit. A SKILL.md or AGENTS.md file instructs the agent to do the same thing (and is probably the easier step from vibe coder to backpressure looping), but the mechanics are completely hidden from you. The Python scripts make every step visible: the validate call, the steer function, and the message history growing each round. Even if you end up using .md files, the code is the easiest way to understand how backpressure works in practice.

Using it in VS Code

Both scripts run from the VS Code integrated terminal. No extension or plugin needed.

```shell
# Claude
python generate_claude_API.py

# Codex
python generate_openai_API.py
```

When you run either script it prompts you for what to build:

```text
IaC Backpressure Generator — Claude

What do you want to build? > storage account with versioning in australiaeast
Output filename (e.g. main.bicep): > storage.bicep

[Round 1] Generating...
⚠️ 1 violation(s):
• Use AVM: br/public:avm/res/storage/storage-account:0.14.3

[Round 2] Generating...

✅ Passed all checks on round 2
Written → out/storage.bicep
```

The output lands in out/ inside your workspace and appears in the file explorer immediately. Review, commit, done.

If you want it wired as a VS Code task so you don’t have to type the command, add .vscode/tasks.json:

```json
{
  "version": "2.0.0",
  "tasks": [
    {
      "label": "Generate IaC (Claude)",
      "type": "shell",
      "command": "python generate_claude_API.py",
      "group": "build",
      "presentation": {
        "reveal": "always",
        "panel": "dedicated"
      }
    },
    {
      "label": "Generate IaC (Codex)",
      "type": "shell",
      "command": "python generate_openai_API.py",
      "group": "build",
      "presentation": {
        "reveal": "always",
        "panel": "dedicated"
      }
    }
  ]
}
```

Then it’s Ctrl+Shift+B to pick and run.

Example 1: Claude-based backpressure loop

```python
"""
IaC Backpressure Generator — Claude
=====================================
Generates Bicep via Claude, validates with real tooling,
and steers the model with exact violations until output passes.

Requirements:
    pip install anthropic
    az bicep install
"""

import json
import os
import re
import shutil
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import NamedTuple

import anthropic
from anthropic import Anthropic

MAX_ROUNDS = 5
MAX_TOKENS = 3000
MODEL = "claude-sonnet-4-6"
OUT_DIR = Path("out")
RULES_FILE = Path("rules.json")

SYSTEM_PROMPT = """
You are an Azure IaC engineer writing production-ready Bicep.

Mandatory rules:
1. Use Azure Verified Modules (AVM) for supported resource types.
2. Allowed scopes only: resourceGroup or subscription.
3. Always include location and tags where modules support them.
4. Output Bicep only. No markdown fences. No commentary.
""".strip()

# Raw resource declarations that have an AVM equivalent
AVM_RULES = {
    r"'Microsoft\.Storage/storageAccounts@": "Use AVM: br/public:avm/res/storage/storage-account:0.14.3",
    r"'Microsoft\.KeyVault/vaults@": "Use AVM: br/public:avm/res/key-vault/vault:0.9.0",
    r"'Microsoft\.Web/sites@": "Use AVM: br/public:avm/res/web/site:0.13.0",
}

SCOPE_DENY_PATTERNS = {
    r"targetScope\s*=\s*'tenant'": "tenant scope is not allowed",
    r"targetScope\s*=\s*'managementGroup'": "managementGroup scope is not allowed",
}

# Whitespace-tolerant match for the two permitted scopes
SCOPE_ALLOW_PATTERN = r"targetScope\s*=\s*'(resourceGroup|subscription)'"

FILENAME_SAFE = re.compile(r"^[A-Za-z0-9._-]+$")


class Violation(NamedTuple):
    source: str  # "compile" | "avm" | "scope" | "custom"
    message: str

    def __str__(self) -> str:
        return f"[{self.source}] {self.message}"


def load_custom_rules() -> list[dict]:
    # rules.json is resolved relative to the current working directory
    if not RULES_FILE.exists():
        return []
    data = json.loads(RULES_FILE.read_text(encoding="utf-8"))
    return data.get("rules", [])


def compile_check(bicep_text: str) -> list[Violation]:
    violations: list[Violation] = []
    # shutil.which resolves az.cmd on Windows, where a bare "az" raises FileNotFoundError
    az = shutil.which("az")
    if az is None:
        return [Violation("compile", "az CLI not found")]

    # delete=False so az can open the file after it is closed (required on Windows)
    with tempfile.NamedTemporaryFile(suffix=".bicep", mode="w", delete=False, encoding="utf-8") as f:
        f.write(bicep_text)
        temp_path = Path(f.name)

    try:
        proc = subprocess.run(
            [az, "bicep", "build", "--file", str(temp_path), "--stdout"],
            capture_output=True,
            text=True,
            timeout=45,
        )
        if proc.returncode != 0:
            # Each non-empty stderr line is a compiler diagnostic to feed back
            for line in proc.stderr.splitlines():
                if line.strip():
                    violations.append(Violation("compile", line.strip()))
    except FileNotFoundError:
        violations.append(Violation("compile", "az CLI not found"))
    except subprocess.TimeoutExpired:
        violations.append(Violation("compile", "az bicep build timed out"))
    finally:
        temp_path.unlink(missing_ok=True)

    return violations


def validate(bicep_text: str) -> list[Violation]:
    violations: list[Violation] = []

    # 1. Real compiler first
    violations.extend(compile_check(bicep_text))

    # 2. AVM policy: raw resources that have a verified module
    for pattern, message in AVM_RULES.items():
        if re.search(pattern, bicep_text):
            violations.append(Violation("avm", message))

    # 3. Scope policy
    for pattern, message in SCOPE_DENY_PATTERNS.items():
        if re.search(pattern, bicep_text):
            violations.append(Violation("scope", message))
    if "targetScope" not in "\n".join(bicep_text.splitlines()[:4]):
        violations.append(Violation("scope", "Missing targetScope near top of file"))
    if not re.search(SCOPE_ALLOW_PATTERN, bicep_text):
        violations.append(Violation("scope", "targetScope must be resourceGroup or subscription"))

    # 4. Organisation rules from rules.json
    for rule in load_custom_rules():
        pattern = rule.get("pattern")
        message = rule.get("message", "Custom rule failed")
        if pattern and re.search(pattern, bicep_text, re.IGNORECASE):
            violations.append(Violation("custom", message))

    # De-duplicate while preserving order
    seen: set[Violation] = set()
    deduped: list[Violation] = []
    for v in violations:
        if v not in seen:
            seen.add(v)
            deduped.append(v)
    return deduped


def steer(violations: list[Violation], round_num: int) -> str:
    # Escalate the wording once the model has already failed a round
    heading = (
        "Fix the following issues exactly."
        if round_num == 1
        else f"Still failing after {round_num} rounds. You MUST fix every issue below."
    )
    items = "\n".join(f"- {v}" for v in violations)
    return (
        f"{heading}\n\n{items}\n\n"
        "Rewrite the full Bicep file from scratch and comply with all system rules. "
        "Output Bicep only."
    )


def _validate_filename(name: str) -> None:
    if not name:
        raise ValueError("Output filename cannot be empty")
    if name in (".", ".."):
        raise ValueError(f"Output filename '{name}' is not allowed")
    if not FILENAME_SAFE.match(name):
        raise ValueError(
            f"Output filename '{name}' contains invalid characters; "
            "use only letters, digits, dots, dashes, and underscores"
        )


def _prompt_input(label: str, default: str | None = None) -> str:
    prompt = f"{label} [{default}]: " if default else f"{label}: "
    while True:
        value = input(prompt).strip()
        if not value and default:
            return default
        if value:
            return value
        print(" Value cannot be empty, please try again.")


def generate_with_backpressure(user_request: str, output_name: str) -> Path:
    _validate_filename(output_name)
    if not user_request.strip():
        raise ValueError("User request cannot be empty")

    client = Anthropic()  # reads ANTHROPIC_API_KEY from the environment
    messages: list[dict] = [{"role": "user", "content": user_request}]

    for round_num in range(1, MAX_ROUNDS + 1):
        print(f"\n[Round {round_num}] Generating...")
        resp = client.messages.create(
            model=MODEL,
            max_tokens=MAX_TOKENS,
            system=SYSTEM_PROMPT,
            messages=messages,
        )
        usage = resp.usage
        print(f" tokens: input={usage.input_tokens} output={usage.output_tokens}")
        if resp.stop_reason == "max_tokens":
            print(f"⚠️ Output truncated at {MAX_TOKENS} tokens — raise MAX_TOKENS and retry")

        # Concatenate the text blocks of the response
        raw = "".join(
            chunk.text for chunk in resp.content if getattr(chunk, "type", "") == "text"
        ).strip()

        violations = validate(raw)
        if not violations:
            print(f"\n✅ Passed all checks on round {round_num}")
            OUT_DIR.mkdir(parents=True, exist_ok=True)
            out_path = OUT_DIR / output_name
            out_path.write_text(raw, encoding="utf-8")
            print(f"Written → {out_path}")
            return out_path

        print(f"⚠️ {len(violations)} violation(s):")
        for v in violations:
            print(f" • {v}")

        # Backpressure: the failed output plus exact violations go back into history
        messages.extend([
            {"role": "assistant", "content": raw},
            {"role": "user", "content": steer(violations, round_num)},
        ])

    raise RuntimeError(f"Did not converge within {MAX_ROUNDS} rounds")


def main() -> int:
    if not os.environ.get("ANTHROPIC_API_KEY"):
        print(
            "ERROR: ANTHROPIC_API_KEY environment variable is not set.\n"
            " PowerShell: $env:ANTHROPIC_API_KEY = 'sk-ant-...'\n"
            " bash: export ANTHROPIC_API_KEY=sk-ant-...",
            file=sys.stderr,
        )
        return 1

    print("IaC Backpressure Generator — Claude\n")
    request = _prompt_input("What do you want to build?")
    name = _prompt_input("Output filename", default="main.bicep")

    try:
        generate_with_backpressure(user_request=request, output_name=name)
    except ValueError as e:
        print(f"ERROR: {e}", file=sys.stderr)
        return 2
    except anthropic.AuthenticationError:
        print("ERROR: Invalid ANTHROPIC_API_KEY", file=sys.stderr)
        return 1
    except anthropic.RateLimitError as e:
        print(f"ERROR: Rate limited — {e.message}", file=sys.stderr)
        return 3
    except anthropic.APIStatusError as e:
        print(f"ERROR: API error {e.status_code}: {e.message}", file=sys.stderr)
        return 4
    except RuntimeError as e:
        print(f"ERROR: {e}", file=sys.stderr)
        return 5
    return 0


if __name__ == "__main__":
    sys.exit(main())
```
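Rule 4 of the system prompt asks for no markdown fences, but models occasionally wrap their output in one anyway, and `az bicep build` then fails on the fence line and costs you a round. A small, hypothetical `strip_fences` helper, applied to `raw` before `validate(raw)` in `generate_with_backpressure`, is one way to absorb that. It is a sketch that only removes an opening fence at the very start and a closing fence on the last line; anything else passes through unchanged.

```python
FENCE = "`" * 3  # built indirectly so this snippet can live inside a Markdown code block


def strip_fences(raw: str) -> str:
    """Remove a leading fence line and a trailing fence line, if present."""
    text = raw.strip()
    if not text.startswith(FENCE):
        return text
    lines = text.splitlines()
    body = lines[1:]  # drop the opening fence line, e.g. one tagged "bicep"
    if body and body[-1].strip() == FENCE:
        body = body[:-1]  # drop the closing fence
    return "\n".join(body).strip()


wrapped = FENCE + "bicep\ntargetScope = 'resourceGroup'\n" + FENCE
print(strip_fences(wrapped))  # targetScope = 'resourceGroup'
```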

Example 2: Codex-based backpressure loop

```python
"""
IaC Backpressure Generator — Codex
=====================================
Generates Bicep via OpenAI Codex, validates with real tooling,
and steers the model with exact violations until output passes.

Requirements:
    pip install openai
    az bicep install
"""

import json
import os
import re
import shutil
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import NamedTuple

import openai
from openai import OpenAI

MAX_ATTEMPTS = 5
MODEL = "gpt-5.4-mini"
OUTPUT_DIR = Path("out")
ORG_RULES_PATH = Path("rules.json")

SYSTEM_INSTRUCTIONS = """
You are an Azure IaC specialist.

Hard requirements:
- Produce Bicep only.
- Prefer Azure Verified Modules for supported resources.
- targetScope must be resourceGroup or subscription only.
- Include location and tags parameters for module-driven deployments.
""".strip()

# Raw resource declarations that have an AVM equivalent
RAW_RESOURCE_TO_AVM = {
    r"'Microsoft\.Storage/storageAccounts@": "Use AVM module br/public:avm/res/storage/storage-account:0.14.3",
    r"'Microsoft\.OperationalInsights/workspaces@": "Use AVM module br/public:avm/res/operational-insights/workspace:0.7.0",
}

# Insecure settings that must never ship
SECURITY_PATTERNS = {
    r"allowBlobPublicAccess\s*:\s*true": "allowBlobPublicAccess must be false",
    r"minimumTlsVersion\s*:\s*'TLS1_0'": "minimumTlsVersion must be TLS1_2",
    r"minimumTlsVersion\s*:\s*'TLS1_1'": "minimumTlsVersion must be TLS1_2",
    r"httpsOnly\s*:\s*false": "httpsOnly must be true",
}

# Whitespace-tolerant scope matches
SCOPE_DENY_PATTERN = r"targetScope\s*=\s*'(managementGroup|tenant)'"
SCOPE_ALLOW_PATTERN = r"targetScope\s*=\s*'(resourceGroup|subscription)'"

FILENAME_SAFE = re.compile(r"^[A-Za-z0-9._-]+$")


class Violation(NamedTuple):
    source: str  # "compile" | "avm" | "scope" | "security" | "custom"
    message: str

    def __str__(self) -> str:
        return f"[{self.source}] {self.message}"


def _prompt_input(label: str, default: str | None = None) -> str:
    prompt = f"{label} [{default}]: " if default else f"{label}: "
    while True:
        value = input(prompt).strip()
        if not value and default:
            return default
        if value:
            return value
        print(" Value cannot be empty, please try again.")


def parse_rules() -> list[dict]:
    # rules.json is resolved relative to the current working directory
    if not ORG_RULES_PATH.exists():
        return []
    payload = json.loads(ORG_RULES_PATH.read_text(encoding="utf-8"))
    return payload.get("rules", [])


def run_bicep_build(candidate: str) -> list[Violation]:
    issues: list[Violation] = []
    # shutil.which resolves az.cmd on Windows, where a bare "az" raises FileNotFoundError
    az = shutil.which("az")
    if az is None:
        return [Violation("compile", "az CLI not available")]

    # delete=False so az can open the file after it is closed (required on Windows)
    with tempfile.NamedTemporaryFile(suffix=".bicep", mode="w", delete=False, encoding="utf-8") as tmp:
        tmp.write(candidate)
        temp_file = Path(tmp.name)

    try:
        build = subprocess.run(
            [az, "bicep", "build", "--file", str(temp_file), "--stdout"],
            capture_output=True,
            text=True,
            timeout=45,
        )
        if build.returncode != 0:
            # Each non-empty stderr line is a compiler diagnostic to feed back
            for line in build.stderr.splitlines():
                if line.strip():
                    issues.append(Violation("compile", line.strip()))
    except FileNotFoundError:
        issues.append(Violation("compile", "az CLI not available"))
    except subprocess.TimeoutExpired:
        issues.append(Violation("compile", "az bicep build timed out"))
    finally:
        temp_file.unlink(missing_ok=True)

    return issues


def evaluate(candidate: str) -> list[Violation]:
    findings: list[Violation] = []
    findings.extend(run_bicep_build(candidate))

    # Scope policy
    if re.search(SCOPE_DENY_PATTERN, candidate):
        findings.append(Violation("scope", "only resourceGroup or subscription scopes are permitted"))
    if not re.search(SCOPE_ALLOW_PATTERN, candidate):
        findings.append(Violation("scope", "missing valid targetScope declaration"))

    # AVM policy
    for pattern, message in RAW_RESOURCE_TO_AVM.items():
        if re.search(pattern, candidate):
            findings.append(Violation("avm", message))

    # Secure defaults
    for pattern, message in SECURITY_PATTERNS.items():
        if re.search(pattern, candidate):
            findings.append(Violation("security", message))

    # Organisation rules from rules.json
    for custom in parse_rules():
        pattern = custom.get("pattern")
        message = custom.get("message", "Custom policy violation")
        if pattern and re.search(pattern, candidate, re.IGNORECASE):
            findings.append(Violation("custom", message))

    # De-duplicate while preserving order
    seen: set[Violation] = set()
    deduped: list[Violation] = []
    for f in findings:
        if f not in seen:
            seen.add(f)
            deduped.append(f)
    return deduped


def build_retry_prompt(findings: list[Violation], attempt: int) -> str:
    # Escalate the wording once the model has already failed an attempt
    urgency = (
        "Fix every issue below."
        if attempt == 1
        else f"Attempt {attempt} failed. Fix ALL issues exactly."
    )
    details = "\n".join(f"- {item}" for item in findings)
    return (
        f"{urgency}\n\n{details}\n\n"
        "Regenerate the complete Bicep and output only valid Bicep text."
    )


def _validate_filename(name: str) -> None:
    if not name:
        raise ValueError("Output filename cannot be empty")
    if name in (".", ".."):
        raise ValueError(f"Output filename '{name}' is not allowed")
    if not FILENAME_SAFE.match(name):
        raise ValueError(
            f"Output filename '{name}' contains invalid characters; "
            "use only letters, digits, dots, dashes, and underscores"
        )


def generate_iac(request: str, filename: str) -> Path:
    _validate_filename(filename)
    if not request.strip():
        raise ValueError("User request cannot be empty")

    client = OpenAI()  # reads OPENAI_API_KEY from the environment
    history: list[dict] = [{"role": "user", "content": request}]

    for attempt in range(1, MAX_ATTEMPTS + 1):
        print(f"\n[Round {attempt}] Generating...")
        response = client.responses.create(
            model=MODEL,
            instructions=SYSTEM_INSTRUCTIONS,
            input=history,
        )
        usage = getattr(response, "usage", None)
        if usage is not None:
            in_tok = getattr(usage, "input_tokens", "?")
            out_tok = getattr(usage, "output_tokens", "?")
            print(f" tokens: input={in_tok} output={out_tok}")

        candidate = response.output_text.strip()
        findings = evaluate(candidate)
        if not findings:
            print(f"\n✅ Passed all checks on round {attempt}")
            OUTPUT_DIR.mkdir(parents=True, exist_ok=True)
            destination = OUTPUT_DIR / filename
            destination.write_text(candidate, encoding="utf-8")
            print(f"Written → {destination}")
            return destination

        print(f"⚠️ {len(findings)} violation(s):")
        for f in findings:
            print(f" • {f}")

        # Backpressure: the failed output plus exact findings go back into history
        history.extend([
            {"role": "assistant", "content": candidate},
            {"role": "user", "content": build_retry_prompt(findings, attempt)},
        ])

    raise RuntimeError(f"Codex generation did not pass validation in {MAX_ATTEMPTS} rounds")


def main() -> int:
    if not os.environ.get("OPENAI_API_KEY"):
        print(
            "ERROR: OPENAI_API_KEY environment variable is not set.\n"
            " PowerShell: $env:OPENAI_API_KEY = 'sk-...'\n"
            " bash: export OPENAI_API_KEY=sk-...",
            file=sys.stderr,
        )
        return 1

    print("IaC Backpressure Generator — Codex\n")
    request = _prompt_input("What do you want to build?")
    name = _prompt_input("Output filename", default="main.bicep")

    try:
        generate_iac(request=request, filename=name)
    except ValueError as e:
        print(f"ERROR: {e}", file=sys.stderr)
        return 2
    except openai.AuthenticationError:
        print("ERROR: Invalid OPENAI_API_KEY", file=sys.stderr)
        return 1
    except openai.RateLimitError as e:
        print(f"ERROR: Rate limited — {e}", file=sys.stderr)
        return 3
    except openai.APIStatusError as e:
        print(f"ERROR: API error {e.status_code}: {e.message}", file=sys.stderr)
        return 4
    except RuntimeError as e:
        print(f"ERROR: {e}", file=sys.stderr)
        return 5
    return 0


if __name__ == "__main__":
    sys.exit(main())
```

Optional rules file

Add a rules.json to the directory you run the script from (both scripts resolve it relative to the current working directory) to enforce your organisation’s standards without touching the Python:

```json
{
  "rules": [
    {
      "pattern": "targetScope\\s*=\\s*'(tenant|managementGroup)'",
      "message": "Organisation policy: deployments must run at resourceGroup or subscription scope"
    },
    {
      "pattern": "sku.*Premium",
      "message": "Cost control: Premium SKU requires an approval ticket"
    },
    {
      "pattern": "publicNetworkAccess\\s*:\\s*'Enabled'",
      "message": "Networking policy: public network access must be explicitly reviewed"
    }
  ]
}
```
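Because rules.json is hand-edited, one malformed pattern would raise `re.error` in the middle of a generation round. A sketch of loading the rules once, compiling each pattern with the same `re.IGNORECASE` flag the scripts use, and collecting bad entries up front (`load_rules` is a hypothetical name). The demo also shows a subtlety: without `re.DOTALL`, `.` does not cross newlines, so a pattern like `sku.*Premium` only fires when both words sit on the same line, as in a single-line `skuName: 'Premium_LRS'`.

```python
import json
import re
import tempfile
from pathlib import Path


def load_rules(path: Path) -> tuple[list[tuple[re.Pattern[str], str]], list[str]]:
    """Compile rules.json patterns once; report bad entries instead of crashing mid-loop."""
    if not path.exists():
        return [], []
    rules: list[tuple[re.Pattern[str], str]] = []
    errors: list[str] = []
    entries = json.loads(path.read_text(encoding="utf-8")).get("rules", [])
    for i, rule in enumerate(entries):
        pattern = rule.get("pattern")
        if not pattern:
            errors.append(f"rule {i}: missing pattern")
            continue
        try:
            compiled = re.compile(pattern, re.IGNORECASE)
        except re.error as exc:
            errors.append(f"rule {i}: invalid regex {pattern!r}: {exc}")
            continue
        rules.append((compiled, rule.get("message", "Custom rule failed")))
    return rules, errors


with tempfile.TemporaryDirectory() as tmp:
    rules_path = Path(tmp) / "rules.json"
    rules_path.write_text(json.dumps({"rules": [
        {"pattern": "sku.*Premium", "message": "Cost control: Premium SKU requires an approval ticket"},
        {"pattern": "sku(", "message": "Broken rule"},  # unbalanced parenthesis
    ]}), encoding="utf-8")
    rules, errors = load_rules(rules_path)

bicep = "skuName: 'Premium_LRS'"
matched = [message for regex, message in rules if regex.search(bicep)]
print(matched)  # ['Cost control: Premium SKU requires an approval ticket']
print(errors)   # one entry for rule 1, reporting the invalid regex
```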

What backpressure looks like in practice

Round 1 usually fails one or more checks.

That is expected.

A model might emit raw resources instead of AVM modules, miss secure defaults, or choose an invalid scope. With backpressure, you do not manually rewrite everything. You feed the exact violations back and let the next round correct the output.

A typical convergence pattern:

- round 1: compile failure and AVM policy breach

- round 2: compiles, but violates one security or naming rule

- round 3: passes all checks and writes the artefact

Why this is better than repeated manual prompting

When people stay in vibe mode, they tend to do this:

- prompt

- get wrong output

- react emotionally or miss something important

- reprompt with vague instructions (“This is wrong, redo with AVM”)

- repeat

That burns context and tokens while producing unstable code.

Agentic backpressure is different:

- constraints are explicit

- validation is objective

- correction messages are machine-readable

- convergence is measurable
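“Convergence is measurable” can be taken literally: record the violation set each round and stop early once it stops changing. A minimal sketch, assuming violations are plain strings like the ones the scripts print (`ConvergenceTracker` is a hypothetical name). You could call `record` after each validation and raise with a clear reason when `stalled()` returns True, instead of spending the remaining rounds.

```python
class ConvergenceTracker:
    """Record the violation set for each round and spot a stalled loop."""

    def __init__(self) -> None:
        self.rounds: list[frozenset[str]] = []

    def record(self, violations: list[str]) -> None:
        self.rounds.append(frozenset(violations))

    def counts(self) -> list[int]:
        # Violations per round: the number you want trending to zero
        return [len(r) for r in self.rounds]

    def stalled(self, window: int = 2) -> bool:
        """True when the last `window` rounds failed with an identical, non-empty set."""
        recent = self.rounds[-window:]
        return len(recent) == window and bool(recent[0]) and all(r == recent[0] for r in recent)


tracker = ConvergenceTracker()
tracker.record(["[compile] BCP018 at line 3", "[avm] use the storage-account module"])
tracker.record(["[security] allowBlobPublicAccess must be false"])
tracker.record(["[security] allowBlobPublicAccess must be false"])
print(tracker.counts())   # [2, 1, 1]
print(tracker.stalled())  # True
```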

You are no longer arguing with the model (let’s face it, that’s all vibing is). You are operating a generation pipeline.

Final point

AI-generated IaC will always produce things you did not ask for when vibing. That’s one of the reasons we call it ‘vibing’!

Your job is not to “vibe harder” or rage at the AI. Your job is to design the pressure and guardrails so the system cannot drift:

- scope policy

- scope your personal governance

- scope how you want code written (use camelCase, not sentence case)

- AVM enforcement

- compile checks

- security checks

- organisational rules
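The “how you want code written” guardrail is as checkable as the others. A sketch of a camelCase rule for Bicep symbolic names, assuming a line-start regex over `param`, `var`, `resource`, `module` and `output` declarations is precise enough for your codebase (`naming_violations` is a hypothetical name; it won't see names declared mid-line).

```python
import re

# Symbolic names in Bicep declarations: <keyword> <name> at the start of a line
DECLARATION = re.compile(r"^\s*(param|var|resource|module|output)\s+([A-Za-z_]\w*)", re.MULTILINE)
CAMEL_CASE = re.compile(r"^[a-z][a-zA-Z0-9]*$")


def naming_violations(bicep_text: str) -> list[str]:
    """Report declared names that are not camelCase."""
    return [
        f"{kind} '{name}' should be camelCase"
        for kind, name in DECLARATION.findall(bicep_text)
        if not CAMEL_CASE.match(name)
    ]


sample = """
param storageName string
param Location string = 'australiaeast'
var tag_values = {}
module storageAccount 'br/public:avm/res/storage/storage-account:0.14.3' = {
"""
print(naming_violations(sample))
# ["param 'Location' should be camelCase", "var 'tag_values' should be camelCase"]
```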

That is how you move from entry-level prompting to reliable infrastructure delivery.

The scripts in this blog post, plus example Codex and Claude agent .md files, can be downloaded here.